If Not React, Then What?

Frameworkism isn't delivering. The answer isn't a different tool, it's the courage to do engineering.

Over the past decade, my work has centred on partnering with teams to build ambitious products for the web across both desktop and mobile. This has provided a ring-side seat to a sweeping variety of teams, products, and technology stacks across more than 100 engagements.

While I'd like to be spending most of this time working through improvements to web APIs, the majority of time spent with partners goes to remediating performance and accessibility issues caused by "modern" frontend frameworks (React, Angular, etc.) and the culture surrounding them. These issues are most pronounced today in React-based stacks.

This is disquieting because React is legacy technology, but it continues to appear in greenfield applications.

Surprisingly, some continue to insist that React is "modern." Perhaps we can square the circle if we understand "modern" to apply to React in the way it applies to art. Neither demonstrates contemporary design or construction techniques. They are not built to meet current needs or performance standards, but stand as expensive objets that harken back to the peak of an earlier era's antiquated methods.

In the hope of steering the next team away from the rocks, I've found myself penning advocacy pieces and research into the state of play, as well as giving talks to alert managers and developers of the dangers of today's misbegotten frontend orthodoxies.

In short, nobody should start a new project in the 2020s based on React. Full stop.[1]

The Rule Of Least Client-Side Complexity #

Code that runs on the server can be fully costed. Performance and availability of server-side systems are under the control of the provisioning organisation, and latency can be actively managed by developers and DevOps engineers.

Code that runs on the client, by contrast, is running on The Devil's Computer.[2] Nothing about the experienced latency, client resources, or even available APIs is under the developer's control.

Client-side web development is perhaps best conceived of as influence-oriented programming. Once code has left the datacenter, all a web developer can do is send thoughts and prayers.

As a result, an unreasonably effective strategy is to send less code. In practice, this means favouring HTML and CSS over JavaScript, as they degrade gracefully and feature higher compression ratios. Declarative forms generate more functional UI per byte sent. These improvements in resilience and reductions in costs are beneficial in compounding ways over a site's lifetime.

Stacks based on React, Angular, and other legacy-oriented, desktop-focused JavaScript frameworks generally take the opposite bet. These ecosystems pay lip service to the controls that are necessary to prevent horrific proliferations of unnecessary client-side cruft. The predictable consequences are NPM-algamated bundles full of redundancies like core-js, lodash, underscore, polyfills for browsers that no longer exist, userland ECC libraries, moment.js, and a hundred other horrors.

This culture is so out of hand that it seems 2024's React developers are constitutionally unable to build chatbots without including all of these 2010s holdovers, plus at least one extremely chonky MathML or TeX formatting library to display formulas; something needed in vanishingly few sessions.

Tech leads and managers need to break this spell and force ownership of decisions affecting the client. In practice, this means forbidding React in all new work.

OK, But What, Then? #

This question comes in two flavours that take some work to tease apart:

- The narrow form:

"Assuming we have a well-qualified need for client-side rendering, what specific technologies would you recommend instead of React?"

- The broad form:

"Our product stack has bet on React and the various mythologies that the cool kids talk about on React-centric podcasts. You're asking us to rethink the whole thing. Which silver bullet should we adopt instead?"

Teams that have grounded their product decisions appropriately can productively work through the narrow form by running truly objective bakeoffs. Building multiple small PoCs to determine each approach's scaling factors and limits can even be a great deal of fun.[3] It's the rewarding side of real engineering, trying out new materials under well-understood constraints to improve user outcomes.

Note: Developers building SPAs or islands of client-side interactivity are spoilt for choice. This blog won't recommend a specific tool, but Svelte, Lit, FAST, Solid, Qwik, Marko, HTMX, Vue, Stencil, and a dozen other contemporary frameworks are worthy of your attention.

Despite their lower initial costs, teams investing in any of them will still require strict controls on client-side payloads and complexity, as JavaScript remains at least 3x more expensive than equivalent HTML and CSS, byte-for-byte.
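One way to make such controls concrete is a budget check that runs before code ships. The following is a minimal sketch, not a recommendation of specific numbers: the `PageWeights` shape, the `checkBudget` helper, and the 150 KiB budget are all assumptions made for illustration. The only figure taken from the text above is the 3x weighting applied to JavaScript.

```typescript
// Hypothetical shape: compressed (over-the-wire) bytes per resource type for one page.
interface PageWeights {
  html: number;
  css: number;
  js: number;
}

// From the text: JavaScript costs at least 3x as much as HTML and CSS, byte-for-byte.
const JS_COST_MULTIPLIER = 3;

// Converts raw bytes into "markup-equivalent" bytes so that script is charged
// at its higher processing cost.
function weightedCost(page: PageWeights): number {
  return page.html + page.css + page.js * JS_COST_MULTIPLIER;
}

interface BudgetResult {
  cost: number;
  budget: number;
  ok: boolean;
}

// Reports whether a page fits the budget. A CI step could fail the build when `ok` is false.
function checkBudget(page: PageWeights, budget: number): BudgetResult {
  const cost = weightedCost(page);
  return { cost, budget, ok: cost <= budget };
}

// Assumed budget of 150 KiB of markup-equivalent bytes (an illustrative value only).
const BUDGET = 150 * 1024;
const lean = checkBudget({ html: 20000, css: 15000, js: 30000 }, BUDGET);
const heavy = checkBudget({ html: 20000, css: 15000, js: 100000 }, BUDGET);
console.log(lean.ok, heavy.ok); // prints: true false
```

Charging script at a multiple of its byte size means a team cannot "pay" for a new dependency by trimming an equal number of bytes of markup, which is the point of treating JavaScript as the most expensive resource on the page.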

In almost every case, the constraints on tech stack decisions have materially shifted since they were last examined, or the realities of a site's user base are vastly different than product managers and tech leads expect. Gathering data on these factors allows for first-pass cuts about stack choices, winnowing quickly to a smaller set of options to run bakeoffs for.[4]

But the teams we spend the most time with aren't in that position.

Many folks asking "if not React, then what?" think they're asking in the narrow form but are grappling with the broader version. A shocking fraction of (decent, well-meaning) product managers and engineers haven't thought through the whys and wherefores of their architectures, opting instead to go with what's popular in a sort of responsibility fire brigade.[5]

For some, provocations to abandon React create an unmoored feeling, a suspicion that they might not understand the world any more.[6] Teams in this position are working through the epistemology of their values and decisions.[7] How can they know their technology choices are better than the alternatives? Why should they pick one stack over another?

Many need help orienting themselves as to which end of the telescope is better for examining frontend problems. Frameworkism is now the dominant creed of frontend discourse. It insists that all user problems will be solved if teams just framework hard enough. This is a non-sequitur, if not entirely backwards. In practice, the only thing that makes web experiences good is caring about the user experience, and specifically the experience of folks at the margins. Technologies come and go, but what always makes the difference is giving a toss about the user.

In less vulgar terms, the struggle is to convince managers and tech leads that they need to start with user needs. Or as Public Digital puts it, "design for user needs, not organisational convenience."

The essential component of this mindset shift is replacing hopes based on promises with constraints based on research and evidence. This aligns with what it means to commit wanton acts of engineering because engineering is the practice of designing solutions to problems for users and society under known constraints.

The opposite of engineering is imagining that constraints do not exist or do not apply to your product. The shorthand for this is "bullshit."

Rejecting an engrained practice of bullshitting does not come easily. Frameworkism preaches that the way to improve user experiences is to adopt more (or different) tooling from the framework's ecosystem. This provides adherents with something to do that looks plausibly like engineering, except it isn't. It can even become a totalising commitment; solutions to user problems outside the framework's expanded cinematic universe are unavailable to the frameworkist. Non-idiomatic patterns that unlock significant wins for users are bugs to be squashed. And without data or evidence to counterbalance bullshit artists' assertions, who's to say they're wrong? Orthodoxy unmoored from measurements of user outcomes predictably spins into abstruse absurdities. Heresy, eventually, is perceived to carry heavy sanctions.

It's all nonsense.

Realists do not wallow in abstraction-induced hallucinations about user experiences; they measure them. Realism requires reckoning with the world as it is, not as we wish it to be, and in that way, it's the opposite of frameworkism.

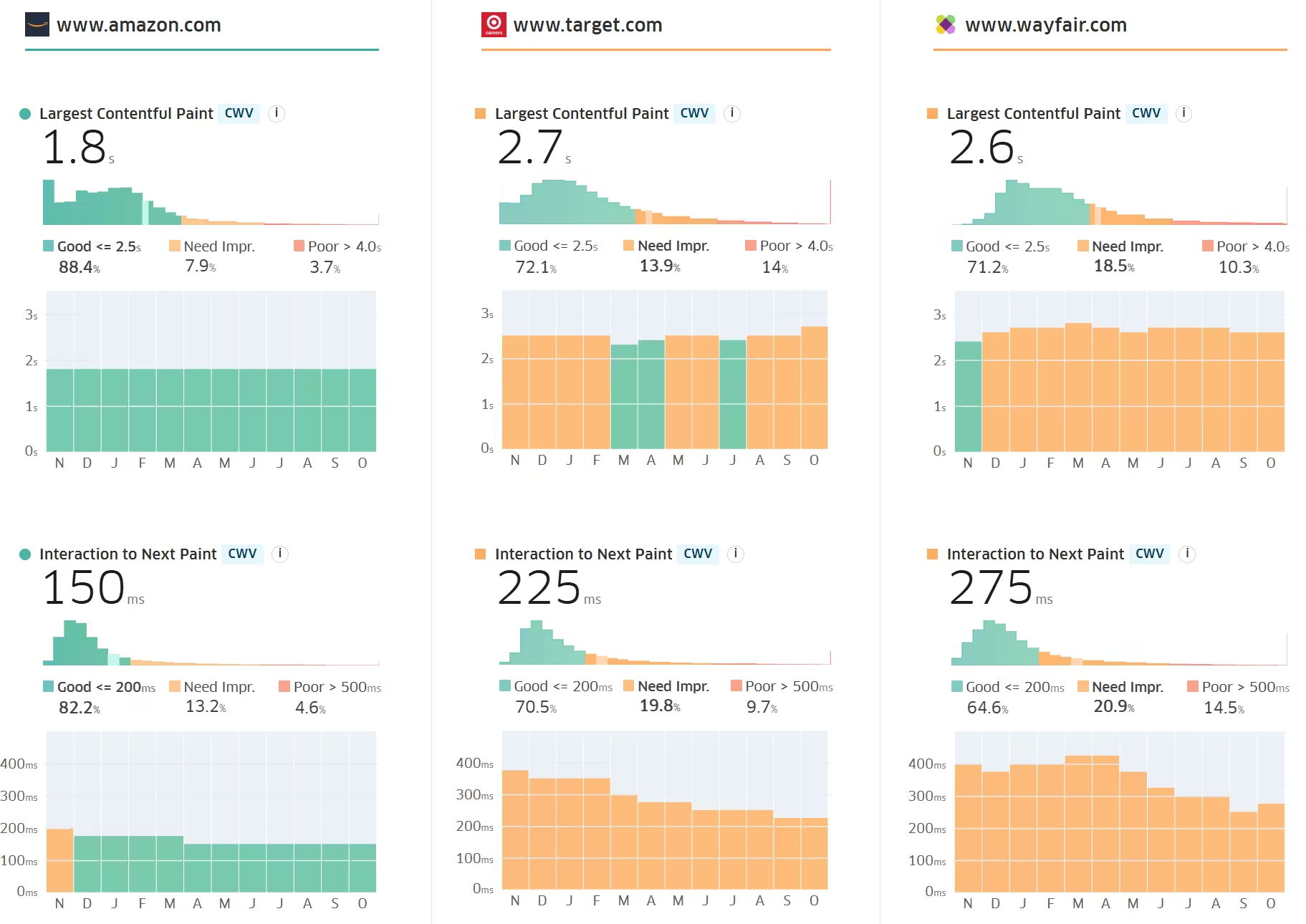

The most effective tools for breaking this spell are techniques that give managers a user-centred view of their system's performance. This can take the form of RUM data, such as Core Web Vitals (check yours now!), or lab results from well-configured test-benches (e.g., WPT). Instrumenting critical user journeys and talking through business goals are quick follow-ups that enable teams to seize the momentum and formulate business cases for change.

RUM and bench data sources are essential antidotes to frameworkism because they provide data-driven baselines to argue about. Instead of accepting the next increment of framework investment on faith, teams armed with data can begin to weigh up the actual costs of fad chasing versus likely returns.
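To give a sense of what such a baseline looks like, here is a small sketch that summarises a batch of field samples the way Core Web Vitals are assessed: at the 75th percentile, against the published "good" and "poor" thresholds for LCP, INP, and CLS. The helper names (`percentile`, `rate`, `summarise`) and the sample values are hypothetical; real samples would come from your own RUM collection.

```typescript
type Metric = "LCP" | "INP" | "CLS";
type Rating = "good" | "needs improvement" | "poor";

// Published Core Web Vitals thresholds:
// [upper bound for "good", upper bound for "needs improvement"].
// LCP and INP are in milliseconds; CLS is unitless.
const THRESHOLDS: Record<Metric, [number, number]> = {
  LCP: [2500, 4000],
  INP: [200, 500],
  CLS: [0.1, 0.25],
};

// Nearest-rank percentile over a sorted copy of the samples (p between 0 and 100).
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// Classifies a single value against the thresholds for its metric.
function rate(metric: Metric, value: number): Rating {
  const [good, needsImprovement] = THRESHOLDS[metric];
  if (value <= good) return "good";
  if (value <= needsImprovement) return "needs improvement";
  return "poor";
}

// Summarises field data at the 75th percentile.
function summarise(metric: Metric, samples: number[]): { p75: number; rating: Rating } {
  const p75 = percentile(samples, 75);
  return { p75, rating: rate(metric, p75) };
}

// Made-up LCP samples in milliseconds, for illustration only.
const lcpSamples = [1200, 1800, 2100, 2600, 3900, 5200, 1500, 2300];
const lcpSummary = summarise("LCP", lcpSamples);
console.log(lcpSummary); // p75 is 2600, rating is "needs improvement"
```

Note that the median of these samples looks healthy; it is the p75 view that surfaces what a quarter of sessions actually experience, which is why averages make poor baselines for these arguments.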

And Nothing Of Value Was Lost #

Prohibiting the spread of React (and other frameworkist totems) by policy is both an incredible cost-saving tactic and a helpful way to reorient teams towards delivery for users. However, better results only arrive once frameworkism itself is eliminated from decision-making. Avoiding one class of mistake won't pay dividends if we spend the windfall on investments within the same error category.

A general answer to the broad form of the problem has several parts:

- User focus: decision-makers must accept that they are directly accountable for the results of their engineering choices. No buck-passing is allowed. Either the system works well for users,[8] including those at the margins, or it doesn't. Systems that are not performing are to be replaced with versions that do. There are no sacred cows, only problems to be solved with the appropriate application of constraints.

- Evidence: the essential shared commitment between management and engineering is a dedication to realism. Better evidence must win.

- Guardrails: policies must be implemented to ward off hallucinatory frameworkist assertions about how better experiences are delivered. Good examples of this include the UK Government Digital Service's requirement that services be built using progressive enhancement techniques. Organisations can tweak guidance as appropriate (e.g., creating an escalation path for exceptions), but the important thing is to set a baseline. Evidence boiled down into policy has power.

- Bakeoffs: no new system should be deployed without a clear set of critical user journeys. Those journeys embody what we expect users to do most frequently in our systems, and once those definitions are in hand, we can do bakeoffs to test how well various systems deliver, given the constraints of the expected marginal user. This process description puts the product manager's role into stark relief. Instead of suggesting an endless set of experiments to run (often poorly), they must define a product thesis and commit to an understanding of what success means. This will be uncomfortable. It's also the job. Graciously accept the resignations of PMs who decide managing products is not in their wheelhouse.

Vignettes #

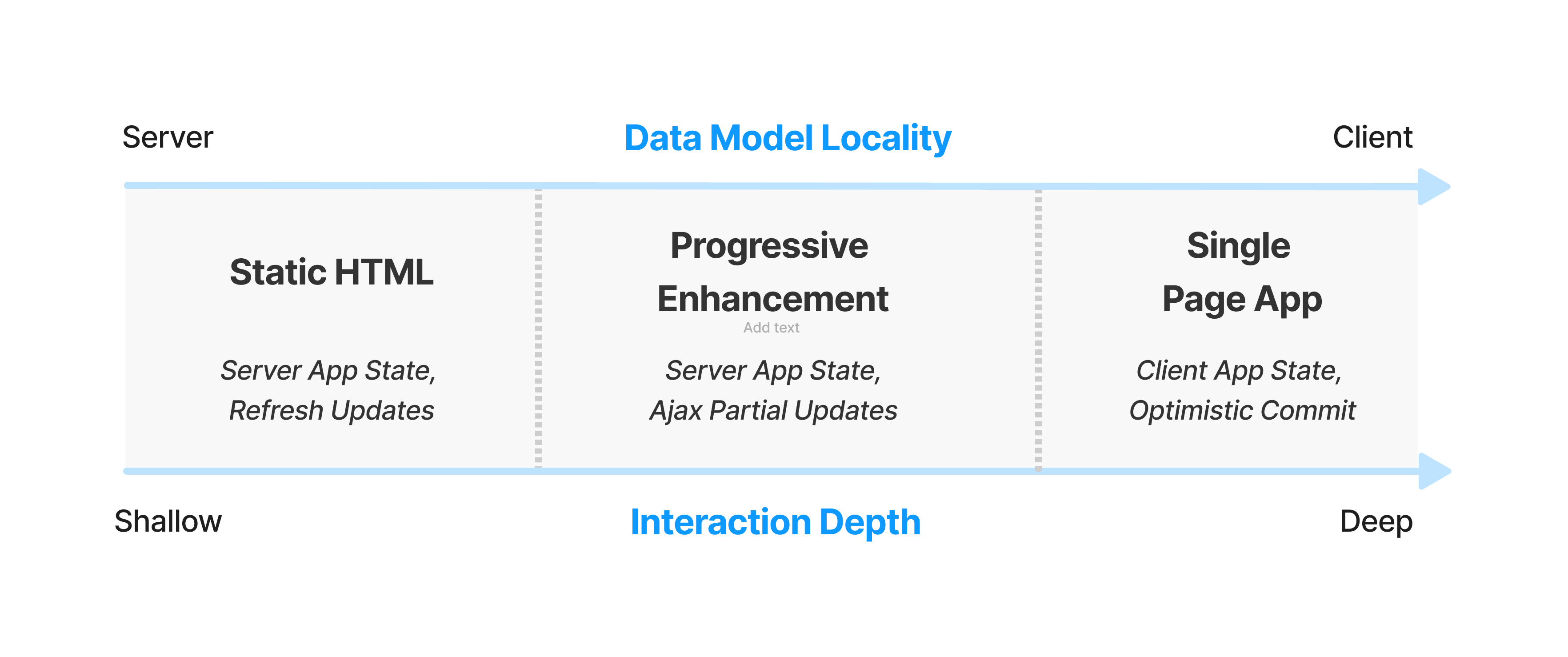

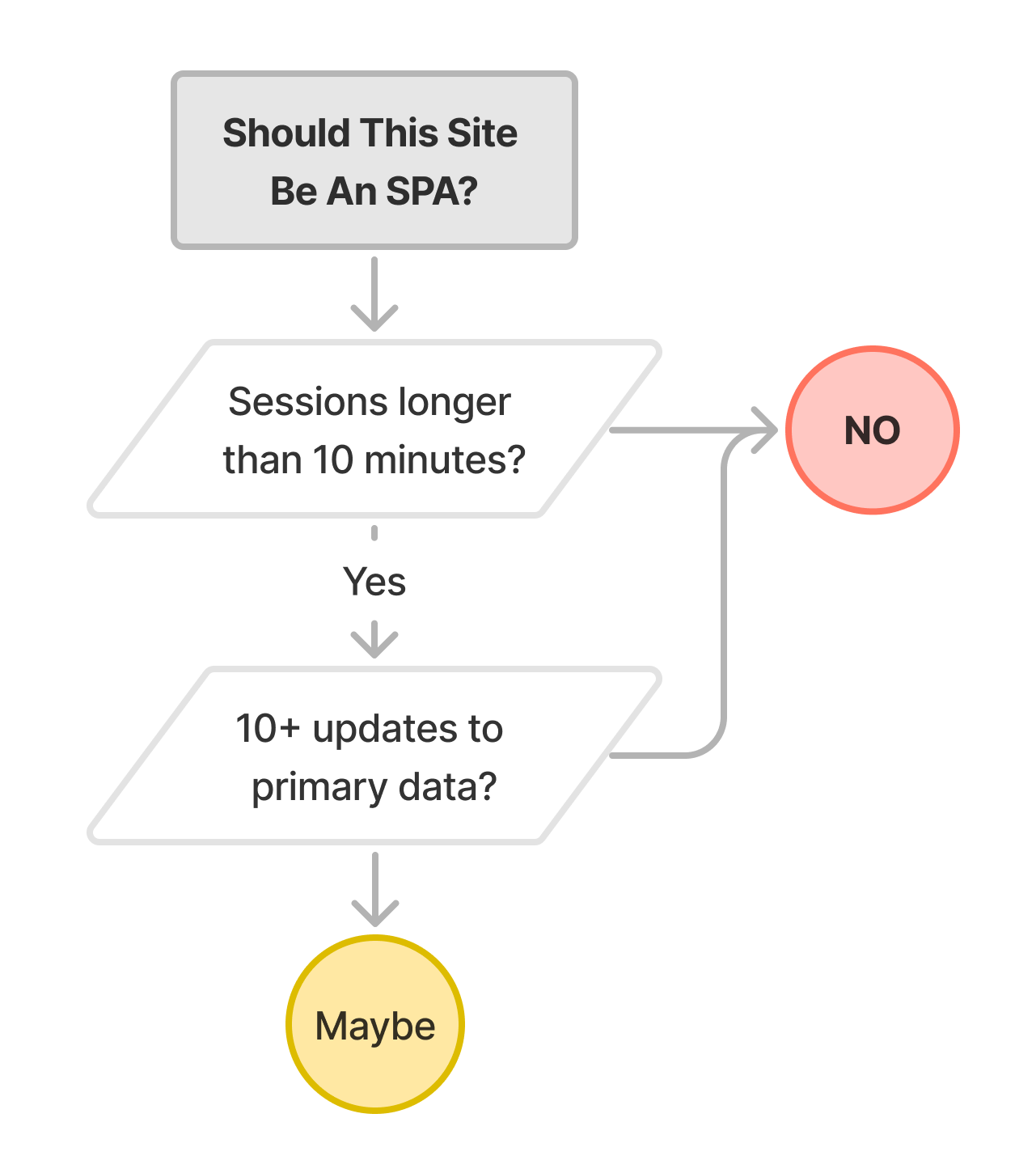

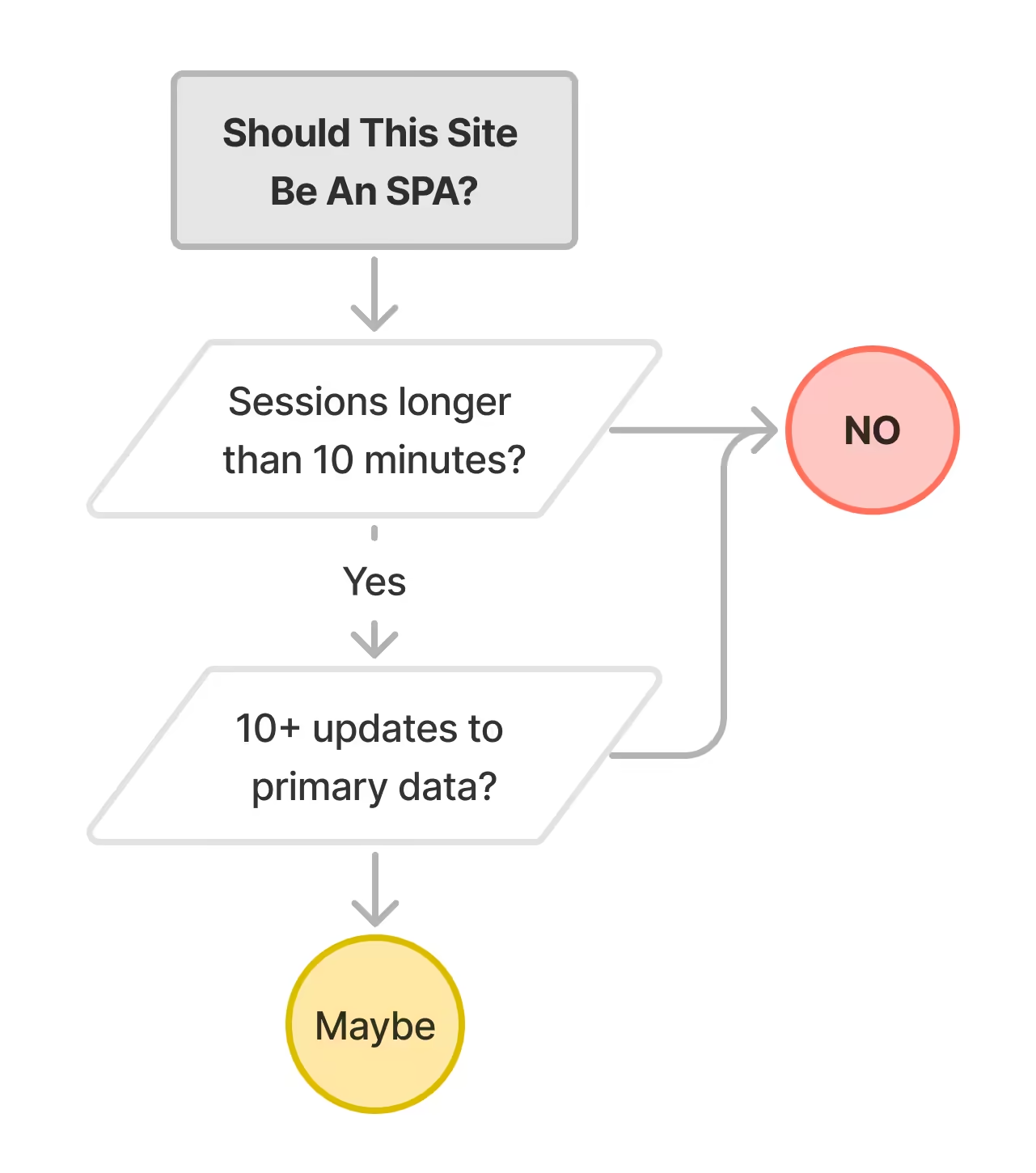

To see how realism and frameworkism differ in practice, it's helpful to work a few examples. As background, recall that our rubric[9] for choosing technologies is based on the number of manipulations of primary data (updates) and session length. Some classes of app feature long sessions and many incremental updates to the primary information of the UI. In these (rarer) cases, a local data model can be helpful in supporting timely application of updates, but this is the exception.

It's only in these exceptional instances that SPA architectures should be considered.

And only when an SPA architecture is required should tools designed to support optimistic updates against a local data model — including "frontend frameworks" and "state management" tools — ever become part of a site's architecture.

The choice isn't between JavaScript frameworks, it's whether SPA-oriented tools should be entertained at all.

For most sites, the answer is clearly "no".

Informational #

Sites built to inform should almost always be built using semantic HTML with optional progressive enhancement as necessary.

Static site generation tools like Hugo, Astro, 11ty, and Jekyll work well for many of these cases. Sites that have content that changes more frequently should look to "classic" CMSes or tools like WordPress to generate HTML and CSS.

Blogs, marketing sites, company home pages, public information sites, and the like should minimise client-side JavaScript payloads to the greatest extent possible. They should never be built using frameworks that are designed to enable SPA architectures.[10]

Why Semantic Markup and Optional Progressive Enhancement Are The Right Choice #

Informational sites have short sessions and server-owned application data models; that is, the source of truth for what's displayed on the page is always the server's to manage and own. This means that there is no need for a client-side data model abstraction or client-side component definitions that might be updated from such a data model.

Note: many informational sites include productivity components as distinct sub-applications. CMSes such as WordPress comprise two distinct surfaces: a low-traffic, high-interactivity editor for post authors, and a high-traffic, low-interactivity viewer UI for readers. Progressive enhancement should be considered for both, but is an absolute must for reader views which do not feature long sessions.[9:1]

E-Commerce #

E-commerce sites should be built using server-generated semantic HTML and progressive enhancement.

Many tools are available to support this architecture. Teams building e-commerce experiences should prefer stacks that deliver no JavaScript by default, and buttress that with controls on client-side script to prevent regressions in material business metrics.

Why Progressive Enhancement Is The Right Choice #

The general form of e-commerce sites has been stable for more than 20 years:

- Landing pages with current offers and a search function for finding products.

- Search results pages which allow for filtering and comparison of products.

- Product-detail pages that host media about products, ratings, reviews, and recommendations for alternatives.

- Cart management, checkout, and account management screens.

Across all of these page types, a pervasive login and cart status widget will be displayed. This widget, and the site's logo, are sometimes the only consistent elements.

Long experience has demonstrated low UI commonality, highly variable session lengths, and the importance of fresh content (e.g., prices) in e-commerce. These factors argue for server-owned application state. The best way to reduce latency is to optimise for lightweight pages. Aggressive caching, image optimisation, and page-weight reduction strategies all help.

Media #

Media consumption sites vary considerably in session length and data update potential. Most should start as progressively-enhanced markup-based experiences, adding complexity over time as product changes warrant it.

Why Progressive Enhancement and Islands May Be The Right Choice #

Many interactive elements on media consumption sites can be modeled as distinct islands of interactivity (e.g., comment threads). Many of these components present independent data models and can therefore be modeled as Web Components within a larger (static) page.

When An SPA May Be Appropriate #

This model breaks down when media playback must continue across media browsing (think "mini-player" UIs). A fundamental limitation of today's web platform is that it is not possible to preserve some elements from a page across top-level navigations. Sites that must support features like this should consider using SPA technologies while setting strict guardrails for the allowed size of client-side JS per page.

Another reason to consider client-side logic for a media consumption app is offline playback. Managing a local (Service Worker-backed) media cache requires application logic and a way to synchronise information with the server.

Lightweight SPA-oriented frameworks may be appropriate here, along with connection-state resilient data systems such as Zero or Y.js.

Social #

Social media apps feature significant variety in session lengths and media capabilities. Many present infinite-scroll interfaces and complex post editing affordances. These are natural dividing lines in a design that align well with session depth and client-vs-server data model locality.

Why Progressive Enhancement May Be The Right Choice #

Most social media experiences involve a small, fixed number of actions on top of a server-owned data model ("liking" posts, etc.) as well as a distinct update phase for new media arriving at an interval. This model works well with a hybrid approach as is found in Hotwire and many HTMX applications.

When An SPA May Be Appropriate #

Islands of deep interactivity may make sense in social media applications, and aggressive client-side caching (e.g., for draft posts) may aid in building engagement. It may be helpful to think of these as unique app sections with distinct needs from the main site's role in displaying content.

Offline support may be another reason to download a data model and a snapshot of user state to the client. This should be done in conjunction with an approach that builds resilience for the main application. Teams in this situation should consider a Service Worker-based, multi-page app with "stream stitching" architecture which primarily delivers HTML to the page but enables offline-first logic and synchronisation. Because offline support is so invasive to an architecture, this requirement must be identified up-front.

Note: Many assume that SPA-enabling tools and frameworks are required to build compelling Progressive Web Apps that work well offline. This is not the case. PWAs can be built using stream-stitching architectures that apply the equivalent of server-side templating to data on the client, within a Service Worker.
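The templating half of that idea can be sketched in a few lines. This is an illustration under assumptions, not a description of any particular library: the `Post` shape and the `escapeHtml`, `renderPost`, and `stitchPage` helpers are hypothetical names. The sketch produces an ordered list of HTML chunks; inside a Service Worker, each chunk could be enqueued into a `ReadableStream` backing the `Response`, so the browser begins parsing the cached header before later data has arrived.

```typescript
// Hypothetical data shape for a feed item fetched (or read from a cache)
// by the Service Worker.
interface Post {
  author: string;
  text: string;
}

// Escapes user-supplied text before interpolating it into markup.
function escapeHtml(s: string): string {
  return s
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// The client-side equivalent of a server-side template partial.
function renderPost(post: Post): string {
  return `<article><h2>${escapeHtml(post.author)}</h2><p>${escapeHtml(post.text)}</p></article>`;
}

// Produces the page as ordered chunks: cached header, one chunk per post,
// cached footer. Streaming them in this order is what "stitching" refers to.
function stitchPage(header: string, posts: Post[], footer: string): string[] {
  return [header].concat(posts.map(renderPost), [footer]);
}

const chunks = stitchPage(
  "<!doctype html><title>Feed</title><main>",
  [{ author: "Ada", text: "1 < 2" }],
  "</main>"
);
console.log(chunks.join(""));
```

Because the output is plain HTML, the same templates can run on the server for first loads and in the Service Worker for repeat or offline visits, with no client-side component runtime on the main thread.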

With the advent of multi-page view transitions, MPA architecture PWAs can present fluid transitions between user states without heavyweight JavaScript bundles clogging up the main thread. It may take several more years for the framework community to digest the implications of these technologies, but they are available today and work exceedingly well, both as foundational architecture pieces and as progressive enhancements.

Productivity #

Document-centric productivity apps may be the hardest class to reason about, as collaborative editing, offline support, and lightweight (and fast) "viewing" modes with full document fidelity are all general product requirements.

Triage-oriented data stores (e.g. email clients) are also prime candidates for the potential benefits of SPA-based technology. But as with all SPAs, the ability to deliver a better experience hinges both on session depth and up-front payload cost. It's easy to lose this race, as this blog has examined in the past.
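The race can be put in rough arithmetic. The sketch below assumes a deliberately simple model in which each architecture has a fixed up-front cost and a fixed cost per follow-up interaction; the `Candidate` shape, the helper names, and every number are hypothetical stand-ins for measurements a team would take on a representative device and network.

```typescript
// All figures are hypothetical inputs that a team would measure for itself.
interface Candidate {
  upFrontMs: number;        // time until the first view is usable
  perInteractionMs: number; // latency of each subsequent interaction or navigation
}

// Total latency experienced over a session with `interactions` follow-up actions.
function sessionCost(c: Candidate, interactions: number): number {
  return c.upFrontMs + c.perInteractionMs * interactions;
}

// Smallest number of interactions at which the SPA's total latency drops
// strictly below the MPA's, or null if it never does.
function breakEven(spa: Candidate, mpa: Candidate): number | null {
  const extraUpFront = spa.upFrontMs - mpa.upFrontMs;
  const savedPerInteraction = mpa.perInteractionMs - spa.perInteractionMs;
  if (extraUpFront < 0) return 0;               // the SPA is already ahead at load
  if (savedPerInteraction <= 0) return null;    // the SPA never catches up
  return Math.floor(extraUpFront / savedPerInteraction) + 1;
}

// Illustrative numbers only.
const spa: Candidate = { upFrontMs: 4000, perInteractionMs: 100 };
const mpa: Candidate = { upFrontMs: 1500, perInteractionMs: 600 };
console.log(breakEven(spa, mpa)); // prints: 6
```

With these made-up inputs, sessions shorter than six interactions are better served by the lighter page, which is why session depth needs to be measured rather than assumed.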

Editors of all sorts are a natural fit for local data models and SPA-based architectures to support modifications to them. However, the endemic complexity of these systems ensures that performance will remain a constant struggle. As a result, teams building applications in this style should consider strong performance guardrails, identify critical user journeys up-front, and ensure that instrumentation is in place to ward off unpleasant performance surprises.

Why SPAs May Be The Right Choice #

Editors frequently feature many updates to the same data (e.g., for every keystroke or mouse drag). Applying updates optimistically and only informing the server asynchronously of edits can deliver a superior experience across long editing sessions.

However, teams should be aware that editors may also perform double duty as viewers and that the weight of up-front bundles may not be reasonable for both cases. Worse, it can be hard to tease viewing sessions apart from heavy editing sessions at page load time.

Teams that succeed in these conditions build extreme discipline about the modularity, phasing, and order of delayed package loading based on user needs (e.g., only loading editor components users need when they require them). Teams that get stuck tend to fail to apply controls over which team members can approve changes to critical-path payloads.
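As one small illustration of that discipline, the sketch below defers loading until a user first needs a feature, and ensures the load happens only once. `lazyOnce` is a hypothetical helper, and the loader here is a stand-in; in a real application it would typically wrap a dynamic `import()` of an editor module.

```typescript
// Wraps a loader so the underlying work happens at most once, and only when
// first requested. `loader` would typically be a dynamic import, e.g.
// () => import("./editor-toolbar") (a hypothetical module path).
function lazyOnce<T>(loader: () => Promise<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (pending === undefined) pending = loader();
    return pending;
  };
}

// Stand-in for an editor module, counting how often it is actually loaded.
let loads = 0;
const loadEditor = lazyOnce(() => {
  loads += 1;
  return Promise.resolve({ name: "editor" });
});

// Viewing a document never calls loadEditor(), so viewers pay nothing.
// The first edit gesture triggers the load; later gestures reuse the same promise.
const first = loadEditor();
const second = loadEditor();
console.log(loads, first === second); // prints: 1 true
```

One caveat of this simple version is that a failed load is cached along with successful ones, so a production variant would likely clear `pending` on rejection to allow a retry.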

Other Application Classes #

Some types of apps are intrinsically interactive, focus on access to local device hardware, or centre on manipulating media types that HTML doesn't handle intrinsically. Examples include 3D CAD systems, programming editors, game streaming services, web-based games, media-editing, and music-making systems. These constraints often make complex, client-side JavaScript-based UIs a natural fit, but each should be evaluated in a similarly critical style as when building productivity applications:

- What are the critical user journeys?

- How deep will average sessions be?

- What metrics will we track to ensure that performance remains acceptable?

- How will we place tight controls on critical-path script and other resources?

Success in these app classes is possible on the web, but extreme care is required.

A Word On Enterprise Software: Some of the worst performance disasters I've helped remediate are from a category we can think of, generously, as "enterprise line-of-business apps". Dashboards, workflow systems, corporate chat apps, that sort of thing.

Teams building these experiences frequently assert that "startup performance isn't that important because people start our app in the morning and keep it open all day". At the limit, this can be true, but what they omit is that performance is cultural. Teams that fail to define and measure critical user journeys, including loading, will absolutely fail to define and measure interactivity post-load.

The old saying "how you do anything is how you do everything" is never more true than in software usability.

One consequence of cultures that fail to put the user first is products whose usability is so poor that attributes which didn't matter at the time of sale (like performance) become reasons to switch.

If you've ever had the distinct displeasure of using Concur or Workday, you'll understand what I mean. Challengers win business from these incumbents not by being wonderful, but simply by being usable. The incumbents are powerless to respond because their problems are now rooted deeply in the behaviours they rewarded through hiring and promotion along the way. The resulting management blindspot becomes a self-reinforcing norm that no single leader can shake.

This is why it's caustic to product usability and brand value to allow a culture of disrespect towards users in favour of developer veneration. The only antidote is to stamp it out wherever it arises by demanding user-focused realism in decision-making.

"But..." #

Managers and tech leads that have become wedded to frameworkism often have to work through a series of easily falsified rationales offered by other Over Reactors in service of their chosen ideology. Note, as you read, that none of these protests put the lived user experience front-and-centre. This admission by omission is a reliable property of the sorts of conversations that these sketches are drawn from.

"...we need to move fast" #

This chestnut should always be answered with a question: "for how long?"

This is because the dominant outcome of fling-stuff-together-with-NPM, feels-fine-on-my-$3K-laptop development is to cause teams to get stuck in the mud much sooner than anyone expects. From major accessibility defects to brand-risk levels of lousy performance, the consequence of this sort of thinking has been crossing my desk every week for a decade now.

The one thing I can tell you that all of these teams and products have in common is that they are not moving faster. Brands you've heard of and websites you used this week have come in for help, which we've dutifully provided. The general prescription is to spend a few weeks/months unpicking this Gordian knot of JavaScript. The time spent in remediation does fix the revenue and accessibility problems that JavaScript exuberance causes, but teams are dead in the water while they belatedly add ship gates and bundle size controls and processes to prevent further regression.

This necessary, painful, and expensive remediation generally comes at the worst time and with little support, owing to the JS-industrial-complex's omerta. Managers trapped in these systems experience a sinking realisation that choices made in haste are not so easily revised. Complex, inscrutable tools introduced in the "move fast" phase are now systems that teams must dedicate time to learn, understand deeply, and affirmatively operate. All the while the pace of feature delivery is dramatically reduced.

This isn't what managers think they're signing up for when accepting "but we need to move fast!"

But let's take the assertion at face value and assume a team that won't get stuck in the ditch (🤞): the idea embedded in this statement is, roughly, that there isn't time to do it right (so React?), but there will be time to do it over.

But this is in direct contention with identifying product-market-fit.

Contra the received wisdom of valley-dwellers, the way to find who will want your product is to make it as widely available as possible, then to add UX flourishes.

Teams I've worked with are frequently astonished to find that removing barriers to use opens up new markets, or improves margins and outcomes in parts of a world they had under-valued.

Now, if you're selling Veblen goods, by all means, prioritise anything but accessibility. But in literally every other category, the returns to quality can be best understood as clarity of product thesis. A low-quality experience — which is what is being proposed when React is offered as an expedient — is a drag on the core growth argument for your service. And if the goal is scale, rather than exclusivity, building for legacy desktop browsers that Microsoft won't even sell you is a strategic error.

"...it works for Facebook" #

To a statistical certainty, you aren't making Facebook. Your problems likely look nothing like Facebook's early 2010s problems, and even if they did, following their lead is a terrible idea.

And these tools aren't even working for Facebook. They just happen to be a monopoly in various social categories and so can afford to light money on fire. If that doesn't describe your situation, it's best not to overindex on narratives premised on Facebook's perceived success.

"...our teams already know React" #

React developers are web developers. They have to operate in a world of CSS, HTML, JavaScript, and DOM. It's inescapable. This means that React is the most fungible layer in the stack. Moving between templating systems (which is what JSX is) is what web developers have done fluidly for more than 30 years. Even folks with deep expertise in, say, Rails and ERB, can easily knock out Django or Laravel or WordPress or 11ty sites. There are differences, sure, but every web developer is a polyglot.

React knowledge is also not particularly valuable. Any team familiar with React's...baroque...conventions can easily master Preact, Stencil, Svelte, Lit, FAST, Qwik, or any of a dozen faster, smaller, reactive client-side systems that demand less mental bookkeeping.

"...we need to be able to hire easily" #

The tech industry has just seen many of the most talented, empathetic, and user-focused engineers I know laid off for no reason other than their management couldn't figure out that there would be some mean reversion post-pandemic. Which is to say, there's a fire sale on talent right now, and you can ask for whatever skills you damn well please and get good returns.

If you cannot attract folks who know web standards and fundamentals, reach out. I'll personally help you formulate recs, recruiting materials, hiring rubrics, and promotion guides to value these folks the way you should: as underpriced heroes that will do incredible good for your products at a fraction of the cost of solving the next problem the React community is finally acknowledging that frameworkism itself caused.

Resumes Aren't Murder/Suicide Pacts #

Even if you decide you want to run interview loops to filter for React knowledge, that's not a good reason to use it! Anyone who can master the dark thicket of build tools, TypeScript foibles, and the million little ways that JSX's fork of HTML and JavaScript syntax trips folks up is absolutely good enough to work in a different system.

Heck, they're already working in an ever-shifting maze of faddish churn. The treadmill is real, which means that the question isn't "will these folks be able to hit the ground running?" (answer: no, they'll spend weeks learning your specific setup regardless), it's "what technologies will provide the highest ROI over the life of our team?"

Given the extremely high costs of React and other frameworkist prescriptions, the odds that this calculus will favour the current flavour of the week over the lifetime of even a single project are vanishingly small.

The Bootcamp Thing #

It makes me nauseous to hear managers denigrate talented engineers, and there seems to be a rash of it going around. The idea that folks who come out of bootcamps — folks who just paid to learn whatever was on the syllabus — aren't able or willing to pick up some alternative stack is bollocks.

Bootcamp grads might be junior, and they are generally steeped in varying strengths of frameworkism, but they're not stupid. They want to do a good job, and it's management's job to define what that is. Many new grads might know React, but they'll learn a dozen other tools along the way, and React is by far the most (unnecessarily) complex of the bunch. The idea that folks who have mastered the horrors of useMemo and friends can't take on board DOM lifecycle methods or the event loop or modern CSS is insulting. It's unfairly stigmatising and limits the organisation's potential.

In other words, definitionally atrocious management.

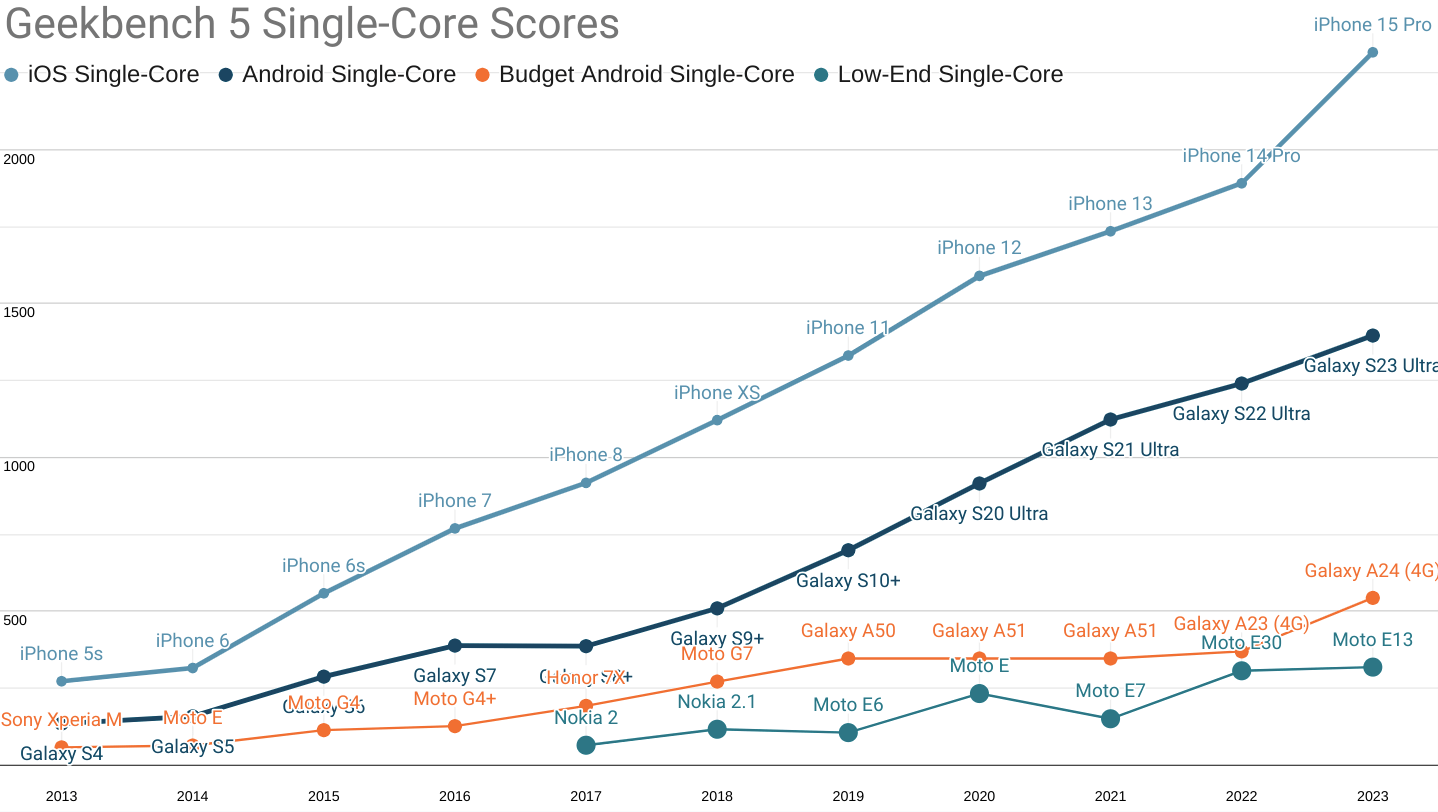

"...everyone has fast phones now" #

For more than a decade, the core premise of frameworkism has been that client-side resources are cheap (or are getting increasingly inexpensive) and that it is, therefore, reasonable to trade some end-user performance for developer convenience.

This has been an absolute debacle. Since at least 2012, the rise of mobile has falsified this contention, and (as this blog has meticulously catalogued) we are only just starting to turn the corner.

The frameworkist assertion that "everyone has fast phones" is many things, but first and foremost it's an admission that the folks offering it don't know what they're talking about, and they hope you don't either.

No business trying to make it on the web can afford what these folks are selling, and you are under no obligation to offer your product as sacrifice to a false god.

"...React is industry-standard" #

This is, at best, a comforting fiction.

At worst, it's a knowing falsity that serves to omit the variability in React-based stacks because, you see, React isn't one thing. It's more of a lifestyle, complete with choices to make about React itself (function components or class components?) languages and compilers (typescript or nah?), package managers and dependency tools (npm? yarn? pnpm? turbo?), bundlers (webpack? esbuild? swc? rollup?), meta-tools (vite? turbopack? nx?), "state management" tools (redux? mobx? apollo? something that actually manages state?) and so on and so forth. And that's before we discuss plugins to support different CSS transpilation or the totally optional side-quests that frameworkists have led many of the teams I've consulted with down; "CSS-in-JS" being one particularly risible example.

Across more than 100 consulting engagements, I've never seen two identical React setups, save smaller cases where folks had yet to change the defaults of Create React App (which itself changed dramatically over the years before finally being removed from the React docs as the best way to get started).

There's nothing standard about any of this. It's all change, all the time, and anyone who tells you differently is not to be trusted.

The Bare (Assertion) Minimum #

Hopefully, you'll forgive a digression into how the "React is industry standard" misdirection became so embedded.

Given the overwhelming evidence that this stuff isn't even working on the sites of the titular React poster children, how did we end up with React in so many nooks and crannies of contemporary frontend?

Pushy know-it-alls, that's how. Frameworkists have a way of hijacking every conversation with bare assertions like "virtual DOM means it's fast" without ever understanding anything about how browsers work, let alone the GC costs of their (extremely chatty) alternatives. This same ignorance allows them to confidently assert that React is "fine" when cheaper alternatives exist in every dimension.

These are not serious people. You do not have to take them seriously. But you do have to oppose them and create data-driven structures that put users first. The long-term costs of these errors are enormous, as witnessed by the parade of teams needing our help to achieve minimally decent performance using stacks that were supposed to be "performant" (sic).

"...the ecosystem..." #

Which part, exactly? Be extremely specific. Which packages are so valuable, yet wedded entirely to React, that a team should not entertain alternatives? Do they really not work with Preact? How much money exactly is the right amount to burn to use these libraries? Because that's the debate.

Even if you get the benefits of "the ecosystem" at Time 0, why do you think that will continue to pay out?

Every library presents a stochastic risk of abandonment. Even the most heavily used systems fall out of favour with the JS-industrial-complex's in-crowd, stranding you in the same position as you'd have been in if you accepted ownership of more of your stack up-front, but with less experience and agency. Is that a good trade? Does your boss agree?

And if you don't mind me asking, how's that "CSS-in-JS" adventure working out? Still writing class components, or did you have a big forced (and partial) migration that's still creating headaches?

The truth is that every single package that is part of a repo's devDependencies is, or will be, fully owned by the consumer of the package. The only bulwark against uncomfortable surprises is to consider NPM dependencies a high-interest loan collateralized by future engineering capacity.

The best way to prevent these costs spiralling out of control is to fully examine and approve each and every dependency for UI tools and build systems. If your team is not comfortable agreeing to own, patch, and improve every single one of those systems, they should not be part of your stack.
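
One way to make "examine and approve each and every dependency" concrete is a check that fails when a manifest declares a package nobody has agreed to own. The following is a minimal sketch, not a feature of npm or any other tool: the `PackageManifest` shape, the `unapprovedDependencies` helper, the team-maintained allowlist, and the package names are all hypothetical, and it only inspects direct dependencies, not transitive ones.

```typescript
// Hypothetical subset of the fields found in a package.json file.
interface PackageManifest {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

// Returns the sorted names of directly declared dependencies that are missing
// from `approved`, an assumed allowlist of packages the team has explicitly
// agreed to own, patch, and improve.
function unapprovedDependencies(
  manifest: PackageManifest,
  approved: Set<string>,
): string[] {
  const declared = Object.keys(manifest.dependencies ?? {}).concat(
    Object.keys(manifest.devDependencies ?? {}),
  );
  // De-duplicate names that appear in both sections, then filter and sort.
  const unique = declared.filter((name, i) => declared.indexOf(name) === i);
  return unique.filter((name) => !approved.has(name)).sort();
}

// Example with made-up package names.
const exampleManifest: PackageManifest = {
  dependencies: { "example-ui-lib": "^1.2.0" },
  devDependencies: { "example-bundler": "^4.0.0", "example-linter": "^9.0.0" },
};
const exampleApproved = new Set<string>(["example-bundler"]);

console.log(unapprovedDependencies(exampleManifest, exampleApproved));
// → [ 'example-linter', 'example-ui-lib' ]
```

Run in CI, a non-empty result is a prompt for a conversation about ownership rather than an automatic veto.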

"...Next.js can be fast (enough)" #

Do you feel lucky, punk? Do you?

Because you'll have to be lucky to beat the odds.

Sites built with Next.js perform materially worse than those from HTML-first systems like 11ty, Astro, et al.

It simply does not scale, and the fact that it drags React behind it like a ball and chain is a double demerit. The chonktastic default payload of delay-loaded JS in any Next.js site will compete with ads and other business-critical deferred content for bandwidth, and that's before any custom components or routes are added. Even when using React Server Components. Which is to say, Next.js is a fast way to lose a lot of money while getting locked in to a VC-backed startup's proprietary APIs.

Next.js starts bad and only gets worse from a shocking baseline. No wonder the only Next sites that seem to perform well are those that enjoy overwhelmingly wealthy userbases, hand-tuning assistance from Vercel, or both.

So, do you feel lucky?

"...React Native!" #

React Native is a good way to make a slow app that requires constant hand-tuning and an excellent way to make a terrible website. It has also been abandoned by its poster children.

Companies that want to deliver compelling mobile experiences into app stores from the same codebase as their web site are better served investigating Trusted Web Activities and PWABuilder. If those don't work, Capacitor and Cordova can deliver similar benefits. These approaches make most native capabilities available, but centralise UI investment on the web side, providing visibility and control via a single execution path. This, in turn, reduces duplicate optimisation and accessibility headaches.

References #

These are essential guides for frontend realism. I recommend interested tech leads, engineering managers, and product managers digest them all:

- "Building a robust frontend using progressive enhancement" from the UK's Government Digital Service.

- "JavaScript dos and donts" by Github alumnus Mu-An Chiou.

- "Choosing Your Stack" from Cancer Research UK

- "The Monty Hall Rewrite" by Alex Sexton, which breaks down the essential ways that a failure to run an honest bakeoff harms decision-making.

- "Things you forgot (or never knew) because of React" by Josh Collinsworth, which enunciates just how baroque and parochial React's culture has become.

- "The Frontend Treadmill" by Marco Rogers explains the costs of frameworkism better than I ever could.

- "Questions for a new technology" by Kellan Elliott-McCrea and Glyph's "Against Innovation Tokens". Together, they set a well-focused lens for thinking about how frameworkism is antithetical to functional engineering culture.

These pieces are from teams and leaders that have succeeded in outrageously effective ways by applying the realist tenets of looking around for themselves and measuring. I wish you the same success.

Thanks to Mu-An Chiou, Hasan Ali, Josh Collinsworth, Ben Delarre, Katie Sylor-Miller, and Mary for their feedback on drafts of this post.

Why not React? Dozens of reasons, but a shortlist must include:

- React is legacy technology. It was built for a world where IE 6 still had measurable share, and it shows.

- React's synthetic event system and hard-coded element list are a direct consequence of IE's limitations. Independently, these create portability and performance hazards. Together, they become a driver of lock-in.

- No contemporary framework contains equivalents because no other framework is fundamentally designed around the need to support IE.

- It's beating a dead horse, but Microsoft's flagship apps do not support IE and you cannot buy support for IE. All versions of IE have been forcibly removed from Win10 and it has not appeared above the noise in global browser market share stats for more than 4 years. New projects will never encounter IE, and it's vanishingly unlikely that existing applications need to support it.

- Virtual DOM was never fast.

- React backed away from incorrect, overheated performance claims almost immediately.[11]

- In addition to being unnecessary to achieve reactivity, React's diffing model and poor support for dataflow management conspire to regularly generate extra main-thread work at inconvenient times. The "solution" is to learn (and zealously apply) a set of extremely baroque, React-specific solutions to problems React itself causes.

- The only thing that React's doubled-up work model can, in theory, speed up is a structured lifecycle that helps programmers avoid reading back style and layout information at inconvenient times.

- In practice, React does not actually prevent forced layouts. Nearly every React app that crosses my desk is littered with layout thrashing bugs.

- The only defensible performance claims Reactors make for their work-doubling system are phrased as a trade; e.g. "CPUs are fast enough now that we can afford to do work twice for developer convenience."

- Except they aren't. CPUs stopped getting faster about the same time as Reactors began to perpetuate this myth. This did not stop them from pouring JS into the ecosystem as though the old trends had held, with predictably disastrous results.

- It isn't even necessary to do all the work twice to get reactivity! Every other reactive component system from the past decade is significantly more efficient, weighs less on the wire, and preserves the advantages of reactivity without creating horrible "re-render debugging" hunts that take weeks away from getting things done.

- React's thought leaders have been wrong about frontend's constraints for more than a decade.

- Why would you trust them now? Their own websites perform poorly in the real world.

- The money you'll save can be measured in truck-loads.

- Teams that correctly cabin complexity to the server side can avoid paying inflated salaries to begin with.

- Teams that do build SPAs can more easily control the costs of those architectures by starting with a cheaper baseline and building a mature performance culture into their organisations from the start.

- Not for nothing, but avoiding React will insulate your team from the assertion-heavy, data-light React discourse.

Why pick a slow, backwards-looking framework whose architecture is compromised to serve legacy browsers when smaller, faster, better alternatives with all of the upsides (and none of the downsides) have been production-ready and successful for years? ⇐

Frontend web development, like other types of client-side programming, is under-valued by "generalists" who do not respect just how freaking hard it is to deliver fluid, interactive experiences on devices you don't own and can't control. Web development turns this up to eleven, presenting a wicked effective compression format (HTML & CSS) for UIs but forcing most experiences to be downloaded at runtime across high-latency, narrowband connections with little to no caching. On low-end devices. With no control over which browser will execute the code.

And yet, browsers and web developers frequently collude to deliver outstanding interactivity under these conditions. Often enough that "generalists" don't give a second thought to the repeated miracle of HTML-centric Wikipedia and MDN articles loading consistently quickly, as they gleefully clog those narrow pipes with amounts of heavyweight JavaScript that are incompatible with consistently delivering good user experience. All because they neither understand nor respect client-side constraints.

It's enough to make thoughtful engineers tear their hair out. ⇐ ⇐

Tom Stoppard's classic quip that "it's not the voting that's democracy; it's the counting" chimes with the importance of impartial and objective criteria for judging the results of bakeoffs.

I've witnessed more than my fair share of stacked-deck proof-of-concept pantomimes, often inside large organisations with tremendous resources and managers who say all the right things. But honesty demands more than lip service.

Organisations looking for a complicated way to excuse pre-ordained outcomes should skip the charade. It will only make good people cynical and increase resistance. Teams that want to set bales of benjamins on fire because of frameworkism shouldn't be afraid to say what they want.

They were going to get it anyway; warts and all. ⇐

An example of easy cut lines for teams considering contemporary development might be browser support versus base bundle size.

In 2024, no new application will need to support IE or even legacy versions of Edge. They are not a measurable part of the ecosystem. This means that tools that took the design constraints imposed by IE as a given can be discarded from consideration, given that the extra client-side weight they required to service IE's quirks makes them uncompetitive from a bundle size perspective.

This eliminates React, Angular, and Ember from consideration without a single line of code being written; a tremendous savings of time and effort.

Another example is lock-in. Do systems support interoperability across tools and frameworks? Or will porting to a different system require a total rewrite? A decent proxy for this choice is Web Components support. Teams looking to avoid lock-in can remove systems that do not support Web Components as an export and import format from consideration. This will still leave many contenders, but management can rest assured they will not leave the team high-and-dry.[14] ⇐

The stories we hear when interviewing members of these teams have an unmistakable buck-passing flavour. Engineers will claim (without evidence) that React is a great[13] choice for their blog/e-commerce/marketing-microsite because "it needs to be interactive" — by which they mean it has a Carousel and maybe a menu and some parallax scrolling. None of this is an argument for React per se, but it can sound plausible to managers who trust technical staff about technical matters.

Others claim that "it's an SPA". Should it be a Single Page App? Most are unprepared to answer that question for the simple reason they haven't thought it through.[9:2]

For their part, contemporary product managers seem to spend a great deal of time doing things that do not have any relationship to managing the essential qualities of their products. Most need help making sense of the RUM data already available to them. Few are in touch with the device and network realities of their current and future (🤞) users. PMs that clearly articulate critical-user-journeys for their teams are like hen's teeth. And I can count on one hand teams that have run bakeoffs — without resorting to binary. ⇐

It's no exaggeration to say that team leaders encountering evidence that their React (or Angular, etc.) technology choices are letting down users and the business go through some things.

Follow-the-herd choice-making is an adaptation to prevent their specific decisions from standing out — tall poppies and all that — and it's uncomfortable when those decisions receive belated scrutiny. But when the evidence is incontrovertible, needs must. This creates cognitive dissonance.

Few teams are so entitled and callous that they wallow in denial. Most want to improve. They don't come to work every day to make a bad product; they just thought the herd knew more than they did. It's disorienting when that turns out not to be true. That's more than understandable.

Leaders in this situation work through the stages of grief in ways that speak to their character.

Strong teams own the reality and look for ways to learn more about their users and the constraints that should shape product choices. The goal isn't to justify another rewrite but to find targets the team can work towards, breaking down complexity into actionable next steps. This is hard and often unfamiliar work, but it is rewarding. Setting accurate goalposts can also help the team take credit as they make progress remediating the current mess. These are all markers of teams on the way to improving their performance management maturity.

Others can get stuck in anger, bargaining, or depression. Sadly, these teams are taxing to help; some revert to the mean. Supporting engineers and PMs through emotional turmoil is a big part of a performance consultant's job. The stronger the team's attachment to React community narratives, the harder it can be to accept responsibility for defining success in terms that map directly to the success of a product's users. Teams climb out of this hole when they base constraints for choosing technologies on users' lived experiences within their own products.

But consulting experts can only do so much. Tech leads and managers that continue to prioritise "Developer Experience" (without metrics, natch) and "the ecosystem" (pray tell, which parts?) in lieu of user outcomes can remain beyond reach, no matter how much empathy and technical analysis is provided. Sometimes, you have to cut bait and hope time and the costs of ongoing failure create the necessary conditions for change. ⇐

Most are substituting (perceived) popularity for the work of understanding users and their needs. Starting with user needs creates constraints that teams can then use to work backwards from when designing solutions to accomplish a particular user experience.

Subbing in short-term popularity contest winners for the work of understanding user needs goes hand-in-glove with failures to set and respect business constraints. It's common to hear stories of companies shocked to find the PHP/Python/etc. system they are replacing with the New React Hotness will require multiples of currently allocated server resources for the same userbase. The impacts of inevitably worse client-side lag cost, too, but only show up later. And all of these costs are on top of the larger salaries and bigger teams the New React Hotness invariably requires.

One team I chatted to shared that their avoidance of React was tantamount to a trade secret. If their React-based competitors understood how expensive React stacks are, they'd lose their (considerable) margin advantage. Wild times. ⇐

UIs that work well for all users aren't charity, they're hard-nosed business choices about market expansion and development cost.

Don't be confused: every time a developer makes a claim without evidence that a site doesn't need to work well on a low-end device, understand it as a true threat to your product's success, if not your own career.

The point of building a web experience is to maximize reach for the lowest development outlay, otherwise you'd build a bunch of native apps for every platform instead. Organisations that aren't spending bundles to build per-OS proprietary apps...well...aren't doing that. In this context, unbacked claims about why it's OK to exclude large swaths of the web market to introduce legacy desktop-era frameworks designed for browsers that don't exist any more work directly against strategy. Do not suffer them gladly.

In most product categories, quality and reach are the product attributes web developers can impact most directly. It's wasteful, bordering on insubordinate, to suggest that not delivering those properties is an effective use of scarce funding. ⇐

Should a site be built as a Single Page App?

A good way to work this question is to ask "what's the point of an SPA?". The answer is that they can (in theory) reduce interaction latency, which implies many interactions per session. It's also an (implicit) claim about the costs of loading code up-front versus on-demand. This sets us up to create a rule of thumb.

Sites should only be built as SPAs, or with SPA-premised technologies if (and only if):

- They are known to have long sessions (more than ten minutes) on average

- More than ten updates are applied to the same (primary) data

This instantly disqualifies almost every e-commerce experience, for example, as sessions generally involve traversing pages with entirely different primary data rather than updating a subset of an existing UI. Most also feature average sessions that fail the length and depth tests. Other common categories (blogs, marketing sites, etc.) are even easier to disqualify. At most, these categories can stand a dose of progressive enhancement (but not too much!) owing to their shallow sessions.
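
The rule of thumb above can be written down as a predicate. This is only a sketch: the `SessionProfile` shape and its field names are hypothetical, and the sample numbers are illustrative rather than measured.

```typescript
// Hypothetical summary of a product's session analytics.
interface SessionProfile {
  // Mean session length, in minutes.
  averageSessionMinutes: number;
  // Mean number of updates applied to the same (primary) data per session.
  averageUpdatesToPrimaryData: number;
}

// Both conditions must hold: long sessions (more than ten minutes on average)
// AND more than ten updates to the same primary data.
function passesSpaRuleOfThumb(profile: SessionProfile): boolean {
  return (
    profile.averageSessionMinutes > 10 &&
    profile.averageUpdatesToPrimaryData > 10
  );
}

// Illustrative, made-up profiles.
const storefront: SessionProfile = {
  averageSessionMinutes: 4,
  averageUpdatesToPrimaryData: 1,
};
const documentEditor: SessionProfile = {
  averageSessionMinutes: 45,
  averageUpdatesToPrimaryData: 200,
};

console.log(passesSpaRuleOfThumb(storefront)); // → false
console.log(passesSpaRuleOfThumb(documentEditor)); // → true
```

A site with long sessions but few updates to the same data (say, a reader paging through many unrelated articles) still fails, because both tests are required.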

What's left? Productivity and social apps, mainly.

Of course, there are many sites with bi-modal session types or sub-apps, all of which might involve different tradeoffs. For example, a blogging site is two distinct systems combined by a database/CMS. The first is a long-session, heavy interaction post-writing and editing interface for a small set of users. The other is a short-session interface for a much larger audience who mostly interact by loading a page and then scrolling. As the browser, not developer code, handles scrolling, we omit it from interaction counts. For most sessions, this leaves us only a single data update (initial page load) to divide all costs by.

If the denominator of our equation is always close to one, it's nearly impossible to justify extra weight in anticipation of updates that will likely never happen.[12]

To formalise slightly, we can understand average latency as the sum of latencies in a session, divided by the number of interactions. For multi-page architectures, a session's average latency ($L_m$) is simply a session's summed $\mathit{LCP}$s divided by the number of navigations in a session ($N$):

$$L_m = \frac{\sum_{i=1}^{N} \mathit{LCP}(i)}{N}$$

SPAs need to add initial navigation latency to the latencies of all other session interactions ($I$). The total number of interactions in a session is:

$$N = 1 + I$$

The general form of SPA average latency is:

$$L = \frac{\text{latency}(\text{navigation}) + \sum_{i=1}^{I} \text{latency}(i)}{N}$$

We can handwave a bit and use $\mathit{INP}$ for each individual update (via the Performance Timeline) as our measure of in-page update lag. This leaves some room for gamesmanship (the React ecosystem is famous for attempting to duck metrics accountability with scheduling shenanigans), so a real measurement system will need to substitute end-to-end action completion (including server latency) for $\mathit{INP}$, but this is a reasonable bootstrap.

$\mathit{INP}$ also helpfully omits scrolling unless the programmer does something problematic. This is correct for the purposes of metric construction as scrolling gestures are generally handled by the browser, not application code, and our metric should only measure what developers control. SPA average latency simplifies to:

$$L_s = \frac{\mathit{LCP} + \sum_{i=1}^{I} \mathit{INP}(i)}{N}$$

As a metric for architecture, this is simplistic and fails to capture variance, which SPA defenders will argue matters greatly. How might we incorporate it?

Variance ($\sigma^2$) across a session is straightforward if we have logs of the latencies of all interactions and an understanding of latency distributions. Assuming latencies follow the Erlang distribution, we might have work to do to assess variance, except that complete logs simplify this to the usual population variance formula. Standard deviation ($\sigma$) is then just the square root:

$$\sigma = \sqrt{\frac{\sum_{x \in X} (x - \mu)^2}{N}}$$

Where $\mu$ is the mean (average) of the population $X$, the set of measured latencies in a session.

We can use these tools to compare architectures and their outcomes, particularly the effects of larger up-front payloads for SPA architecture for sites with shallow sessions. Suffice to say, the smaller the denominator (i.e., the shorter the session), the worse average latency will be for JS-oriented designs and the more sensitive variance will be to population-level effects of hardware and networks.
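
As a rough illustration of the denominator effect, here is a sketch of the averages and standard deviation described in this note. It assumes per-navigation load latencies for multi-page sessions, and one initial load latency plus a list of in-page update latencies for SPA sessions, all in milliseconds. The function names and every sample number are made up for illustration; none are measurements.

```typescript
// Arithmetic mean of a non-empty list of latencies (milliseconds).
function mean(xs: number[]): number {
  if (xs.length === 0) throw new Error("need at least one measurement");
  return xs.reduce((sum, x) => sum + x, 0) / xs.length;
}

// Multi-page architecture: every interaction is a navigation, so the session
// average is the mean of the per-navigation load latencies.
function mpaAverageLatency(navigationLatencies: number[]): number {
  return mean(navigationLatencies);
}

// SPA: one initial navigation plus I in-page updates, divided by N = 1 + I.
function spaAverageLatency(initialLoad: number, updateLatencies: number[]): number {
  return mean([initialLoad, ...updateLatencies]);
}

// Population standard deviation over a complete log of session latencies.
function populationStdDev(xs: number[]): number {
  const mu = mean(xs);
  return Math.sqrt(mean(xs.map((x) => (x - mu) * (x - mu))));
}

// Shallow session: a single page view. Illustrative numbers only.
console.log(mpaAverageLatency([1500])); // → 1500
console.log(spaAverageLatency(4000, [])); // → 4000

// Deeper session: ten interactions in total.
const nineFastUpdates = [100, 100, 100, 100, 100, 100, 100, 100, 100];
console.log(spaAverageLatency(4000, nineFastUpdates)); // → 490
console.log(populationStdDev([4000, ...nineFastUpdates])); // → 1170
```

With these invented inputs, the heavier up-front payload only amortises once a session contains many in-page updates, and the single slow initial load dominates the spread.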

A fuller exploration will have to wait for a separate post. ⇐ ⇐ ⇐

Certain frameworkists will claim that their framework is fine for use in informational scenarios because their systems do "Server-Side Rendering" (a.k.a., "SSR").

Parking for a moment discussion of the linguistic crime that "SSR" represents, we can reject these claims by substituting a test: does the tool in question send a copy of a library to support SPA navigations down the wire by default?

This test is helpful, as it shows us that React-based tools like Next.js are wholly unsuitable for this class of site, while React-friendly tools like Astro are appropriate.

We lack a name for this test today, and I hope readers will suggest one. ⇐

React's initial claims of good performance because it used a virtual DOM were never true, and the React team was forced to retract them by 2015. But like many retracted, zombie ideas, there seems to have been no reduction in the rate of junior engineers regurgitating this long-falsified idea as a reason to continue to choose React.

How did such a baldly incorrect claim come to be offered in the first place? The options are unappetising; either the React team knew their work-doubling machine was not fast but allowed others to think it was, or they didn't know but should have.[15]

Neither suggests the sort of grounded technical leadership that developers or businesses should invest heavily in. ⇐

It should go without saying, but sites that aren't SPAs shouldn't use tools that are premised entirely on optimistic updates to client-side data because sites that aren't SPAs shouldn't be paying the cost of creating a (separate, expensive) client-side data store separate from the DOM representation of HTML.

Which is the long way of saying that if there's React or Angular in your blogware, 'ya done fucked up, son. ⇐

When it's pointed out that React is, in fact, not great in these contexts, the excuses come fast and thick. It's generally less than 10 minutes before they're rehashing some variant of how some other site is fast (without traces to prove it, obvs), and it uses React, so React is fine.

Thus begins an infinite regression of easily falsified premises.

The folks dutifully shovelling this bullshit aren't consciously trying to invoke Brandolini's Law in their defence, but that's the net effect. It's exhausting and principally serves to convince the challenged party not that they should try to understand user needs and build to them, but instead that you're an asshole. ⇐

Most managers pay lip service to the idea of preferring reversible decisions. Frustratingly, failure to put this into action is in complete alignment with social science research into the psychology of decision-making biases (open access PDF summary).

The job of managers is to manage these biases. Working against them involves building processes and objective frames of reference to nullify their effects. It isn't particularly challenging, but it is work. Teams that do not build this discipline pay for it dearly, particularly on the front end, where we program the devil's computer.[2:1]

But make no mistake: choosing React is a one-way door; an irreversible decision that is costly to relitigate. Teams that buy into React implicitly opt into leaky abstractions like timing quirks of React's (unique; as in, nobody else has one because it's costly and slow) synthetic event system and non-portable concepts like portals. React-based products are stuck, and the paths out are challenging.

This will seem comforting, but the long-run maintenance costs of being trapped in this decision are excruciatingly high. No wonder Reactors believe they should command a salary premium.

Whatcha gonna do, switch? ⇐

Where do I come down on this?

My interactions with React team members over the years have combined with their confidently incorrect public statements about how browsers work to convince me that honest ignorance about their system's performance sat underneath misleading early claims.

This was likely exacerbated by a competitive landscape in which their customers (web developers) were unable to judge the veracity of the assertions, and a deference to authority; surely Facebook wouldn't mislead folks?

The need for an edge against Angular and other competitors also likely played a role. It's underappreciated how tenuous the position of frontend and client-side framework teams is within Big Tech companies. The Closure library and compiler that powered Google's most successful web apps (Gmail, Docs, Drive, Sheets, Maps, etc.) was not staffed for most of its history. It was literally a 20% project that the entire company depended on. For the React team to justify headcount within Facebook, public success was likely essential.

Understood in context, I don't entirely excuse the React team for their early errors, but they are understandable. What's not forgivable are the material and willful omissions by Facebook's React team once the evidence of terrible performance began to accumulate. The React team took no responsibility, did not explain the constraints that Facebook applied to their JS-based UIs to make them perform as well as they do (particularly on mobile), and benefited greatly from pervasive misconceptions that continue to cast React in a better light than hard evidence can support. ⇐